What will you learn?

The video below, delivers the main points of this blog post on Stochastic Gradient Descent (SGD): (GitHub code available in here)

In our previous 2 posts about gradient, in both post number 1 and post number 2, we did cover gradient descent in all its glory. We even went through a very detailed numerical example just to make sure we are totally convinced by the whole thing!

By the end of this post, you will learn how Stochastic Gradient Descent (SGD) works. You will visualize loss surfaces, hypothesis spaces, and you will also generate this cool animation of SGD travelling on the loss surface 😉

Today we will learn about the drawbacks of gradient descent, of which we have 2, and we will see how we can overcome these problems by not following gradient descent exactly, but by following a stochastic approximation of it, also known as , the Stochastic Gradient Descent (SGD) algorithm. We will travel with SGD in the hypothesis space of model parameters and see how SGD finds the optimal values for the learnable parameters which would correspond to the global minimum value of loss. I am ready when you are 😉

What is wrong with gradient descent?

So, we have learned that gradient descent is nothing but a search algorithm:

- Search where? The hypothesis space of all possible values for our learnable parameters.

- Search what? The optimal values for the learnable parameters of our model, which would minimize the loss.

However, we cannot use gradient descent to search any arbitrary hypothesis space! In fact some properties have to be in place before we could use this amazing search algorithm:

- The hypothesis space must contain continuously parametrized hypotheses. So, an example of such a hypothesis space, is the space of all possible weight values in a neural network. These weights are the parameters of your neural network—hence the word “parametrized”—and these weights can take continuous positive or negative values—hence the word “continuous”.

- We need to be able to differentiate our loss function with respect to the parameters of this space. As we saw in our earlier posts on gradient descent, we need this differentiability if we would want to use gradient descent and search a gigantic hypothesis space in a smarter and more guided way! This differentiation tells us:

how much we should increase or decrease a given weight parameter in our neural network, so our loss would decrease the fastest!

Of course this seems great as we are not totally blind, groping in an infinitely large space for the right weights for our neural networks (i.e., blind stupid training 😉 ). However, you need to know that gradient descent has some major issues! Let’s study them a little more closely:

- As you remember from our previous posts, in the traditional gradient descent, we would go over the entire dataset, and accumulate the entire gradients with respect to each learnable parameter (e.g., the weights in our neural network), and only after we have finished a complete sweep through the dataset, would we update these parameters. With traditional gradient descent, in order to find the global minimum in our loss, we might need to require many thousands of such gradient steps (i.e., going through the entire dataset many thousands of times).

- If there are many multiple local minima in the loss surface, then there is no guarantee that traditional gradient descent will be able to find the global minimum.

These problems are due to the fact that the traditional gradient descent can easily get trapped in any local minimum since it searches for the direction of the steepest descent on the loss surface as it finds the true gradient by going through the entire dataset, before updating the values of the parameters.

However, we need to be able to literally kick it out of those local minima, so it could explore more and not get trapped, so that maybe it could find the global minimum. In other words, we do not want the true absolute gradient vector, but a noisy estimation of it. That little imperfection actually plays in our favor and can help us NOT get trapped in a local minimum!

Stochastic Gradient Descent

Here is what we do in Stochastic Gradient Descent (SGD): For every single input data, the model computes the output. In the next step, the gradient of the error is computed with respect to each and every one of the learnable parameters in the model and according to an update rule, their values are immediately updated. It is only then that the next input data in permitted into the model. This will continue until the whole dataset is explored and fed to the model. In short, below we can see the algorithm for stochastic gradient descent:

Initialize each learnable parameter

to a small random value

Until the termination condition is met, do

For each pair of training example (

,

), do:

Feed

into the model and generate the output

Update each learnable parameter

:

In the above algorithm, , represents a given input data—It can have a certain dimensionality,

. And

represents the ground truth for the given input data. This is what the model strives to learn to predict! Here is something that might not strike you at first glance:

For every single input data,

, there is one separate loss surface!

What?! Hell yeah! I am going to show you how this happens.

Some Mathematical Pre-Requisites

In this section, I would like to define a model, that takes 1 scalar input, , and has 1 trainable parameter,

. By linearly combining them, the model produces the output

. Finally, I will define a loss function that takes the output of the model and computes the loss. For simplicity, this particular loss function does not need the ground-truth,

. This can happen in an unsupervised learning scenario!

I am sick of seeing that bloody bowl-shaped loss surface in every single machine learning book and blog (including my own blog)! Even though it is legit, I want to define a highly convex loss surface with loads of local minima and maxima. I want you to see how SGD will walk out of all of these traps and finds the global minimum!

So, let’s define our model’s output as a function of the input and the trainable weight parameter:

and our loss is computed as follows:

I know, it is a funny looking loss! I will show you how it looks like in the hypothesis space of possible values for our parameter . Of course, we will need to take the derivative of this loss with respect to our trainable parameter,

, so that SGD could actually update

, while minimizing the loss as fast as possible. So, this is the only mathematically heavy part of this post (I promise!):

From the Chain-Rule we know that:

And also, remember the multiplication rule for computing derivatives:

So, let’s first compute :

Using the multiplication rule, we have:

And we know that . I will leave the rest of the algebraic hassle to you (I believe in you ;-)), however, your final answer should be this:

Enough with the math! Let’s dive into some cool visualizations and some nice Python code.

Visualizing the Loss Surfaces

Let’s use Python to produce these cool visualizations. First, let’s import some packages:

Let’s now code the 2 most important functions. First one computes the loss for a given model output, . The second one, computes the derivative of the loss w.r.t the parameter

, given an input data,

:

We know that our loss function is a function of the input data, , and the learnable parameter,

. Given an input data, we can actually plot this surface! What is the name of this surface? It is actually the hypothesis space and the corresponding loss value for every value of the parameter

, in that space:

The hypothesis space, is the space of all possible values for our learnable parameters. In our case, this is a space of scalar values for our one and only learnable parameter,

! We would like to search in this space, and find a value for

, such that it minimizes our defined loss function. Stochastic Gradient Descent (SGD) is an optimization algorithm that searches the hypothesis space, by continuously updating the value of the parameters, in such a way that with every update, we would have the fastest descent in the current value of the loss.

Let’s take a look at some of the loss surfaces, given a few of the training data. For each training example, we will go over a grid of possible values of , and compute the loss for each value of

, given the fixed value of the input data.

If we have

input data, we will have

loss surfaces. It is like every data point, gives us a different perspective, a different way of looking, a different angle to see the loss surface corresponding to the hypothesis space, that is the possible values for the learnable parameters!

The following code produces 3 input data, and plots 3 loss surfaces for us, across the hypothesis space of .

The output of this code produces the following 3 loss surfaces:

I would like to show you, a 3-dimensional view of many of such surfaces! In other words, a visualization across a range of values for the input data , and the learnable parameter,

and the computed loss value corresponding to each pair of (

,

)! This shows you how much indeed, can the loss surface change, with even a small change in the value of

! The code below, creates a grid of values for

and

, computes the loss for each pair of (

,

) and visualizes a 3-dimensional plot:

We can see the output of the code below, from 2 different perspectives. Note that the global minimum is -0.76:

A very important point:

SGD will NOT take place on this 3D surface! Note that this is NOT our hypothesis space! For a given input data

, we will have a 2D plot (that we have shown in the previous plot)! That is on those individual surfaces (that show the loss value in our hypothesis space for the given constant input data

) that SGD will travel and search for the optimal value of

that can minimize the loss!

Perfect! Now we are going to do something really cool! We will define a dataset of scalar values, and feed each one into our model, produce the model output, and compute the loss. Then compute the gradient at a particular weight value and compute the new value for

, and call it

. By computing the corresponding loss value for

and

, we basically have the coordinates of the start and the end of the update vector for our parameter,

.

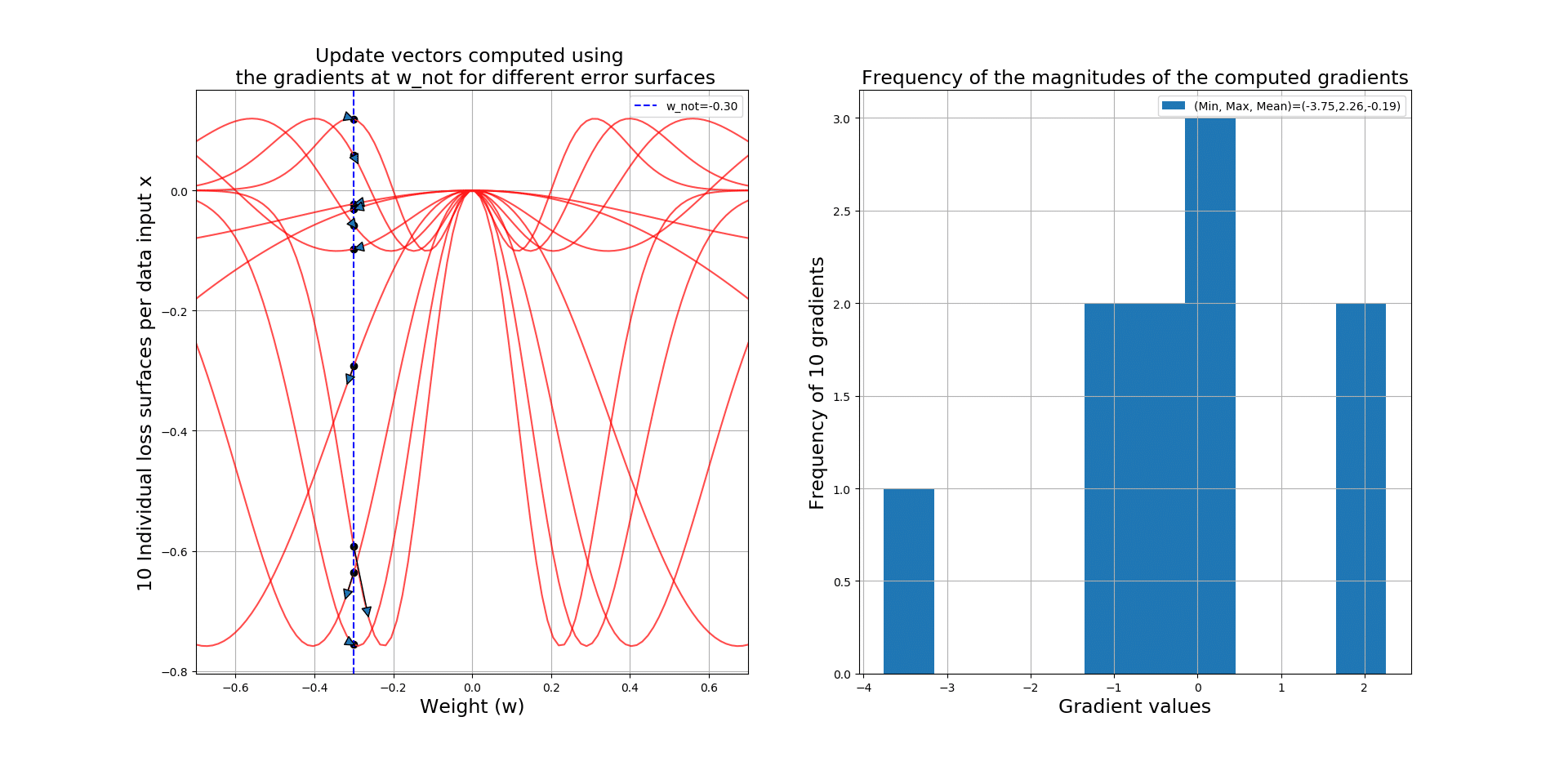

Given each data point, at point , we will compute a different gradient value, since the loss surface changes for every input example! So, if we have 100 input data, then we will have 100 loss surfaces, and 100 gradient values at

across all 100 loss surfaces. Using a histogram, we can gain some insight into the magnitudes of these gradients regarding their distribution, and some statistics like the minimum, maximum and average of them.

So when you hear people saying SGD is really noisy, it is because of the fact that, for every input data, you will have a different error surface and gradient, which you will use to update your parameters! However, this noise can be really helpful, to help you JUMP OUT of the local minima! In other words, it will literally KICK you OUT of the local minima with the hope that you could find the global minimum (i.e., -0.76 in our example).

Let’s take a look at the code that will generate 10 loss surfaces, plots the update vector starting at and ending in

:

The output gives you the gradient vectors over 10 different loss surfaces, along side a histogram giving you some insight into these gradients! See how noisy SGD can get!!!

Below you can see a zoomed-in version so you can see the actual update vectors:

Travelling with SGD

Here is the reward for all of you who have survived this far! Let’s apply the SGD algorithm, and search the hypothesis space for the best value for our learnable parameter , so that hopefully we could find the global minimum 0.76. The main part to focus on in the code below is that:

For every input data, after computing the gradient at current

, we will UPDATE

, as this is how SGD works! This way we will use the noise, imposed on the loss surface by every input example

.

Let’s travel with SGD on many loss surfaces and see how we will occasionally get trapped at some local minima but SGD, due to its stochasticity (where for EVERY data point we have a different loss surface), kicks us out of those minima! See how this will help us find the global minimum! The code below, initiates SGD and the travel in the hypothesis space:

See the beautiful generated animation below. Notice how we get stuck in local minima and even in a fully flat surface where gradient is 0, but the change in the loss surface kicks us of out of them.

Note that the current loss becomes 0.76 in the end, which is the same as our global minimum!

Conclusion

SGD iterates through the entire training set, for each input data, it updates the learnable parameters of the model according to the gradient with respect to those parameters. This iteration and sequential updates over all the training examples, provides a reasonable approximation to descending in the direction of the True Gradient which is computed and used in the traditional Gradient Descent algorithm. SGD provides an approximation of the true gradient vector, and its noisy by nature! We have seen how this noise can help us avoid getting trapped into the local minima, which is really important when the loss surface has many such local minima!

Responses